The Deepfakes Analysis Unit (DAU) analysed two videos, one of them apparently featuring Rajnath Singh, India’s Defence Minister, and the other one supposedly featuring Gen. Upendra Dwivedi, India’s Chief of Army Staff. Both videos have one thing in common: the featured subjects seem to suggest that India offers unequivocal support to Israel in the ongoing conflict in West Asia. After putting the videos through A.I. detection tools and getting our expert partners to weigh in, we were able to conclude that the videos were manipulated with synthetic audio.

Both the videos are in English. An Instagram link to General Dwivedi’s purported video, which is 57 seconds long, was sent to the DAU tipline for assessment. Mr. Singh’s purported video is 35 seconds long and was discovered by the DAU during social media monitoring, embedded in a Facebook post.

The Facebook account which posted the supposed Singh video goes by the profile name of “Tabrez Shams” and carries a profile picture of a man. The text with the video, also in English, read: “Indian defense minister Rajnath Singh is expressing support for Israel’s attack against Iran. This has been very clear that india is favor of killing Muslims” (sic). The profile details of the account suggest that it belongs to someone who identifies as a “digital creator” based in Asansol, a city in West Bengal.

The Instagram post carrying the general’s supposed video was shared by an account with the display name of “elephantnewsgh” and a display picture carrying a stylistic representation of an elephant head along with the words “elephant” and “news”. The profile details indicate that the account is associated with a “media/news company” and mention Ghana as the location.

We do not have any evidence to suggest whether the videos originated from any of the accounts on Facebook, Instagram, or elsewhere.

The fact-checking unit of the Press Information Bureau (PIB), which debunks misinformation related to the Indian government, posted fact-checks for the two videos (here and here) from its verified handle on X, formerly Twitter.

Singh has been captured in a medium close-up in the video. He seems to be standing behind what looks like a lectern; only its top is visible, with two microphones placed on it. As he appears to talk, his head moves, his gaze shifts, and his hands move in an animated manner as if addressing an audience. His backdrop comprises a light beige wall with vertical lines across most of it. A logo resembling that of ANI, an Indian news agency, is visible in the top-right corner of the video frame.

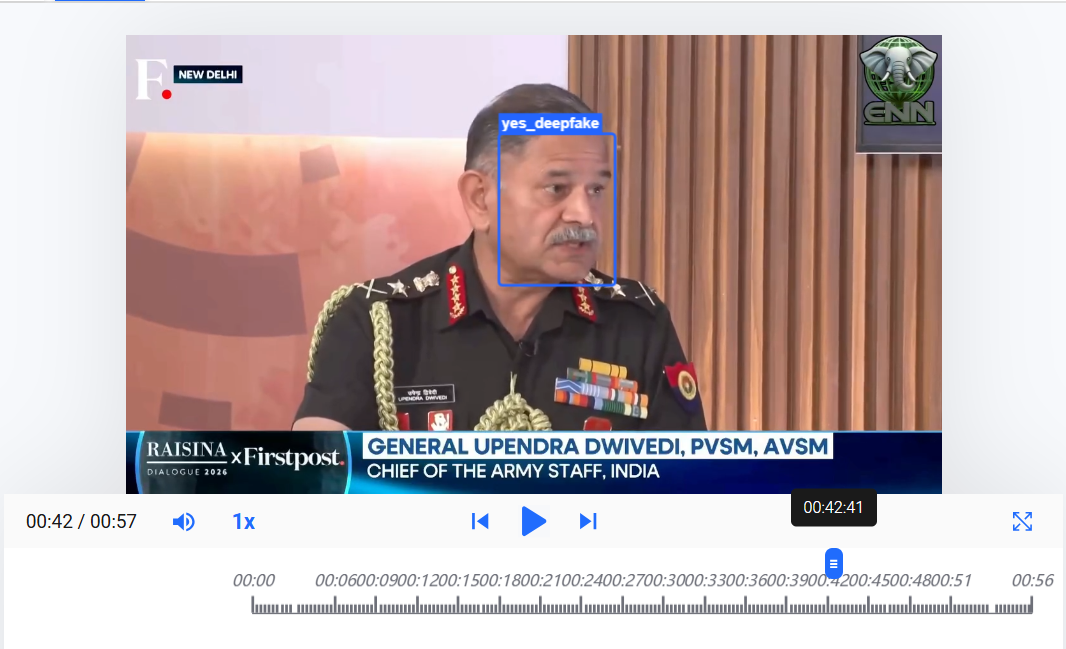

The supposed video of the general shows him in a medium close-up, apparently seated and in conversation with a man whose visuals can be seen in between; the two men cannot be seen together in one frame. The general’s head and eyes oscillate between his left side and front, giving the impression that he is addressing others besides that man. His backdrop is a combination of what appears to be a wooden panel and a peach-and-grey patterned background; in the top-right corner a portion of a television screen is visible.

In the same video, a logo appears in the top-right corner at frequent intervals; it includes a stylised depiction of an elephant head set against a globe, with the letters “ENN” visible in bold below it. The same logo is visible on the videos posted from the Instagram account, which we mentioned above.

A logo resembling that of Firstpost, an Indian news website, is visible in the top-left corner of the video frame with “New Delhi” written next to it in white capital letters set against a dark background. In the bottom-left corner, an animated text graphic arrangement reads: “Raisina Dialogue 2026 x Firstpost”. Next to it, another set of static text graphics reads: “General Upendra Dwivedi, PVSM, AVSM, Chief of Army Staff, India”. The same set of graphics, including the name and title of the army chief, is visible with the visuals of the other man seen in the video.

The overall quality of the videos purportedly featuring Singh and the general is good, and the lip-sync of the subjects also appears consistent with the accompanying audio tracks in the respective videos. However, Singh’s teeth as well as the general’s seem to disappear and reappear between frames. The general’s teeth also appear to change shape slightly; in a few frames his lower set of teeth seems to unnaturally overlap with his lower lip.

The jump cuts in the supposed video of the general make his facial movements appear abrupt at specific instances, especially when he seems to be turning his face from the side to the front; his body movements also seem rushed in those instances.

We compared the voices attributed to Singh and the general with their recorded speeches and interviews available online. For each of them, the voice, tone, accent, and pauses are similar across both sets of recordings; however, the cadence does not match. The general’s delivery as heard in his voice samples is faster and his pitch sharper; overall the voices in the videos sound scripted. There is an echo effect in the audio track of Singh’s supposed video.

We undertook a reverse image search using screenshots from the two videos. Singh’s visuals were traced to this video published on his official YouTube channel on Nov. 23, 2025. (A shorter version of this video was also posted from the official X handle of ANI the same day.) The general’s visuals were traced to this video published on March 7, 2026, from the official YouTube channel of Firstpost.

The clothing, backdrop, and body language of Singh and the general are identical in the videos we traced and those we reviewed, though Singh’s face has an unnatural shine in the latter.

Singh speaks in Hindi in the video that we traced and the general speaks in English in his video. The content of the audio tracks in these videos is different from the videos we reviewed; in those the audio tracks for both the supposed subjects are in English.

The audio track in Singh’s source video has ambient sound of cheering, clapping, and a slight echo effect. His manipulated video does not carry any of these ambient sounds, though it has an echo effect which is different and more pronounced. The ANI logo seen in the doctored video is not part of the source video but appears in its shorter version, posted by ANI. The positioning of the logo is also identical in the doctored video and that shorter version, which indicates that the manipulated version was created using a clip from the shorter version.

The general’s source video also features the other person seen in his manipulated video. The text graphics, logo, and their positioning in the source video and the manipulated video match, except for the stylised elephant head logo, which is not part of the source video. Unlike in the manipulated video, the graphics with the name and title of the general never appear with the visuals of the other man in the source video. It appears that the doctored video has been created by stitching together short clips from the source video, which has no jump cuts.

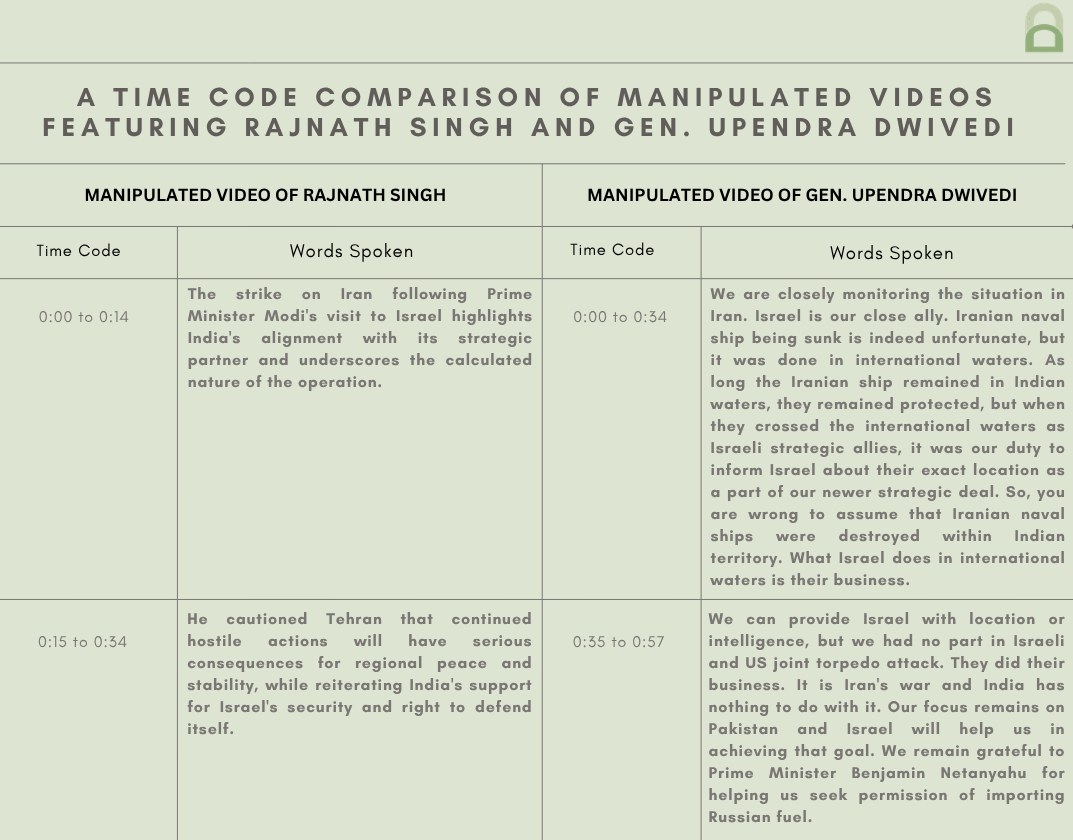

Shared below is a table that compares the transcripts of the audio tracks from the doctored videos. We want to give our readers a sense of how the audio tracks are being used to peddle a certain narrative. We, of course, do not intend to give any oxygen to the bad actors behind this content.

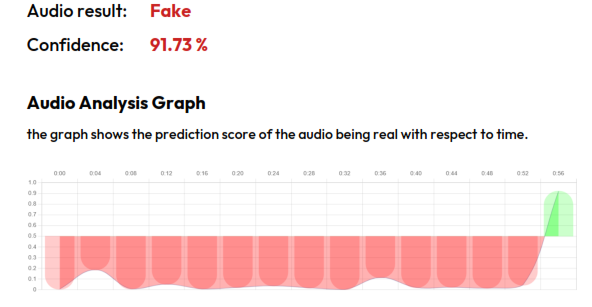

To discern the extent of A.I. manipulation in the videos we reviewed, we put them through A.I. detection tools.

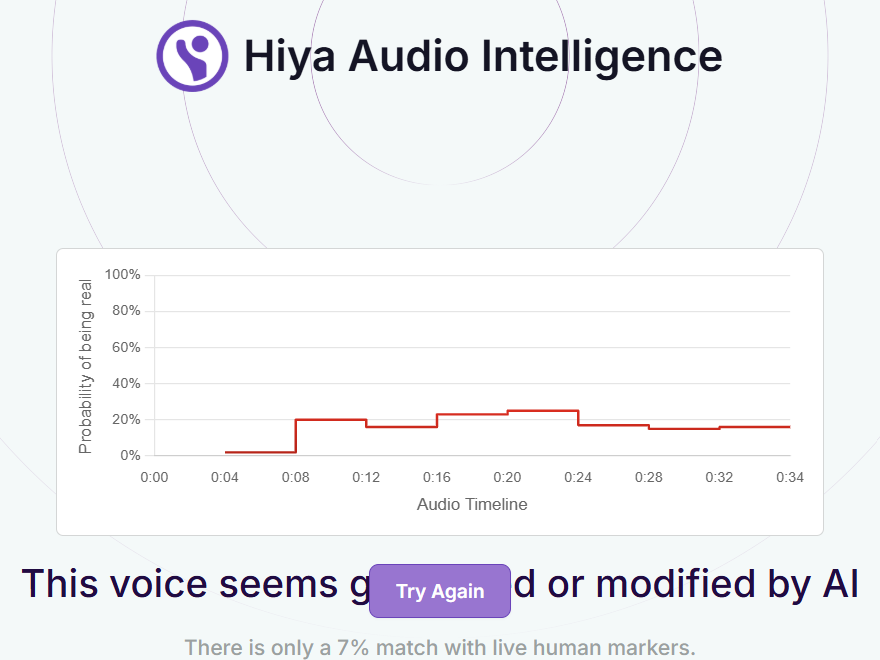

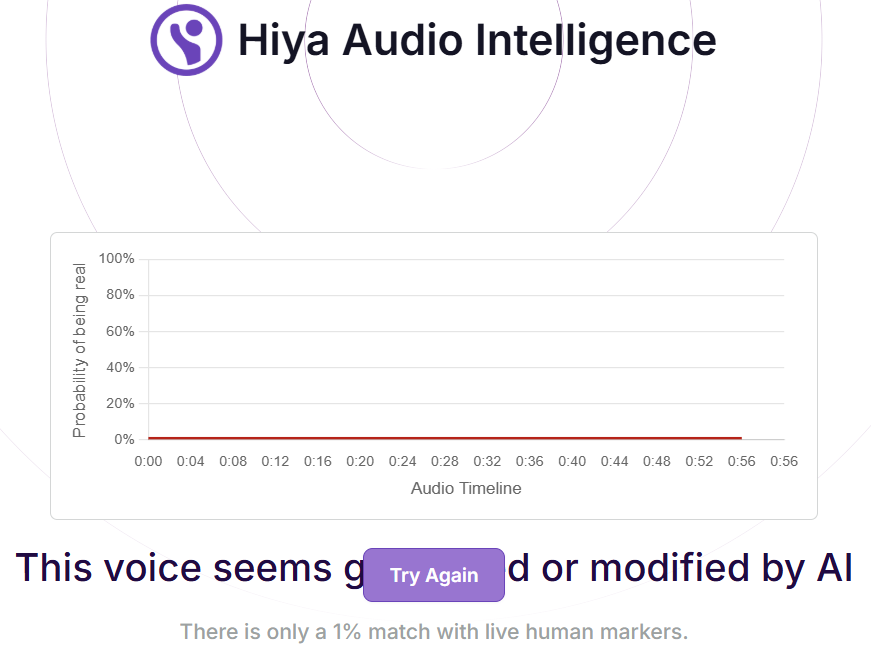

The voice tool of Hiya, a company that specialises in artificial intelligence solutions for voice safety, indicated that there is a 93 percent probability that the audio track in the video supposedly featuring Singh was generated or modified using A.I. For the general’s purported audio the tool gave a 99 percent probability of it having been modified or generated using A.I.

Hive AI’s deepfake video detection tool highlighted several markers of A.I. manipulation in the doctored videos of the general and Singh. Their audio detection tool indicated that the audio tracks in both the videos are “A.I.-generated”.

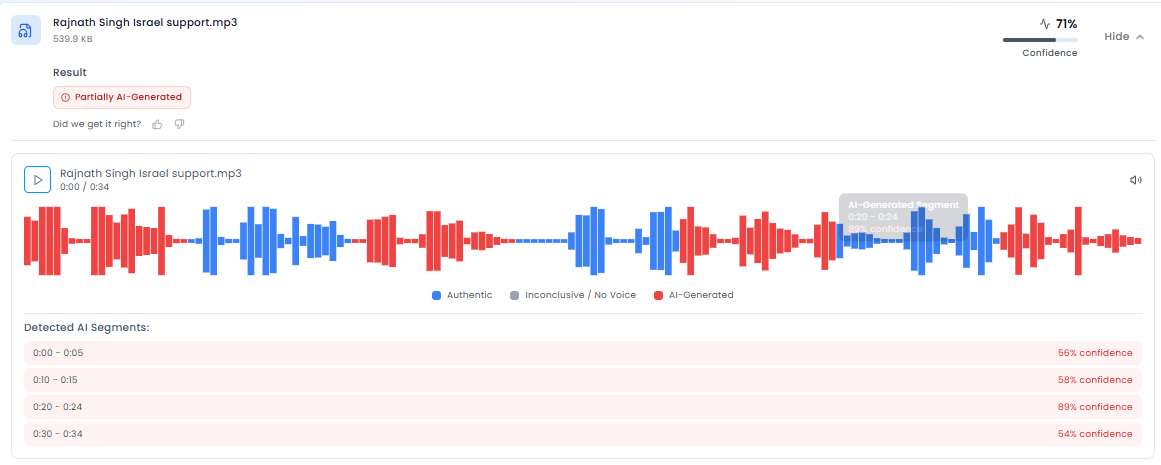

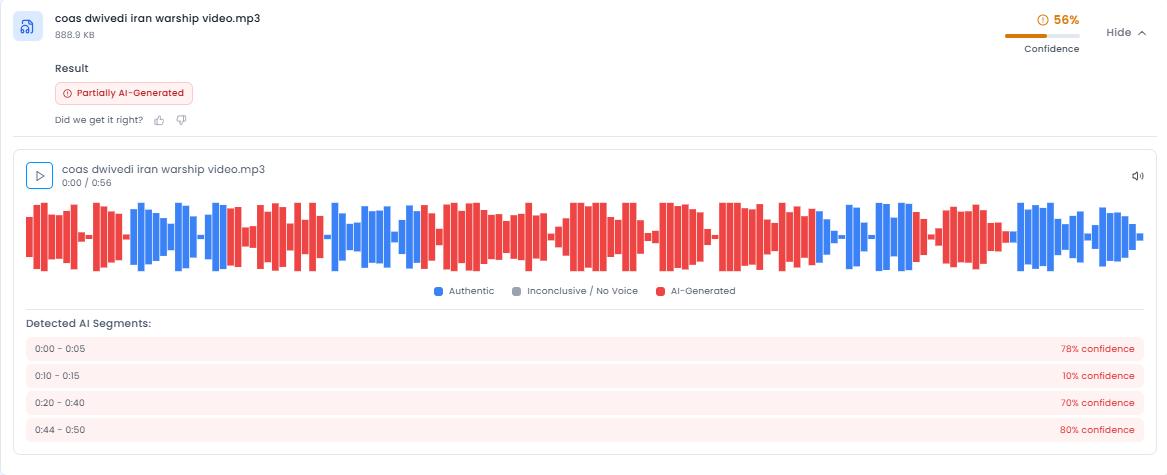

We ran the audio tracks through the advanced audio deepfake detection engine of Aurign.ai, a Swiss deeptech company. The results indicated a 71 percent confidence in the purported audio of Singh being partially A.I.-generated. The purported audio of the general was categorised as partially A.I.-generated with 56 percent confidence by the tool.

We also put the audio tracks through the A.I. speech classifier of ElevenLabs, a company specialising in voice A.I. research and deployment. The results indicated that it was “very unlikely” that the audio tracks in Singh’s and the general’s doctored videos were generated using their platform.

A further analysis by the ElevenLabs team established that the audio track attributed to Singh is synthetic or A.I.-generated. However, they were unable to conclusively determine whether the general’s purported audio originated from their platform.

To get an analysis on the videos we reached out to ConTrails AI, a Bangalore-based startup with its own A.I. tools for detection of audio and video spoofs. The team ran the videos through audio and video detection models.

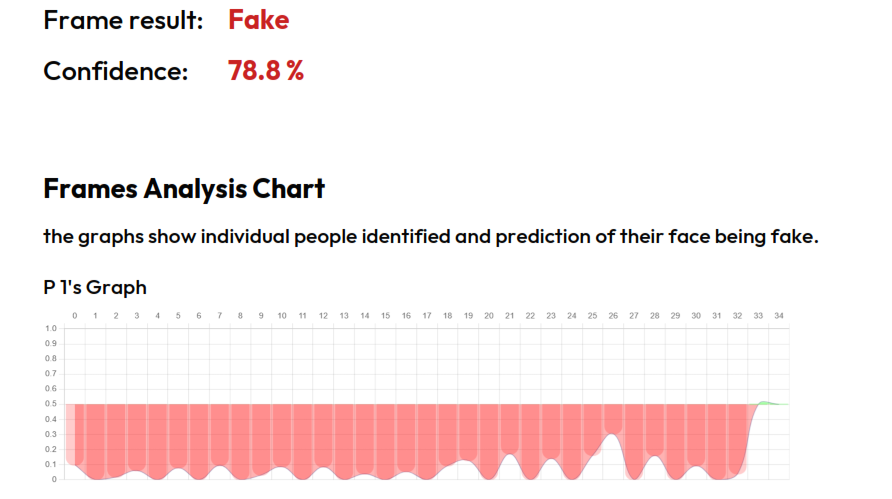

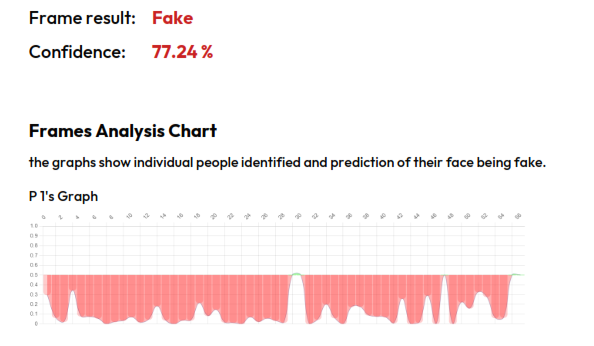

The results indicated A.I. manipulation in the video track of the general’s purported video and A.I.-generation in its audio track, while highlighting A.I. manipulation only in the video track for Singh’s purported video.

The team noted that a lip-sync technique was used in Singh’s purported video. They highlighted the evident over-smoothening of the subject’s facial features as a result of the heavy post-processing methods used in the manipulated video. However, they were unable to give conclusive results about the audio track of the video.

The team stated that in the general’s purported video a lip-sync technique was used to manipulate pixels and synchronise the visuals with the audio track. They detected clear signs of the use of voice cloning techniques for the A.I. audio generation, adding that the monotonous nature of the audio further validates their findings.

To get an expert to weigh in on Singh’s purported video, we escalated it to the Global Online Deepfake Detection System (GODDS), a detection system set up by Northwestern University’s Security & AI Lab (NSAIL). The video was analysed by two human analysts, run through 22 deepfake detection algorithms for video analysis, and 70 deepfake detection algorithms for audio analysis.

Of the 22 predictive models used for video analysis, eight gave a higher probability of the video being fake and the remaining 14 gave a lower probability of the video being fake. Of the 70 predictive models used for audio analysis, 66 gave a higher probability of the audio being fake, while the remaining four gave a lower probability of the audio being fake.
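For readers who want the proportions behind these counts, the tallies can be converted into percentages. The short Python sketch below does only that arithmetic on the reported numbers; the function name `fake_share` is our own illustrative choice and is not part of GODDS, and the calculation does not describe how GODDS weighs its models or reaches a verdict.

```python
def fake_share(flagged: int, total: int) -> float:
    """Return the percentage of models that leaned towards 'fake', to one decimal."""
    if total <= 0 or not 0 <= flagged <= total:
        raise ValueError("flagged must be between 0 and total, and total must be positive")
    return round(100 * flagged / total, 1)


# Counts as reported for Singh's purported video (hypothetical variable names).
video_share = fake_share(8, 22)   # video models leaning towards "fake"
audio_share = fake_share(66, 70)  # audio models leaning towards "fake"

print(f"Video models leaning fake: {video_share}%")
print(f"Audio models leaning fake: {audio_share}%")
```

On these counts, roughly a third of the video models and well over nine in ten of the audio models leaned towards the content being fake, which is in line with the finding that the manipulation lies mainly in the audio track.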

In their report, the team pointed to specific time codes from the video where, as Singh appears to speak, his teeth and mouth seem to blur together, despite an otherwise seemingly clear appearance. They also highlighted how the top of his hair seems unnaturally blurry throughout the video.

The team identified another set of time codes where the subject’s lips frequently intersect with the microphones. They mentioned that the voice in the audio track seems to lack the natural tonal and cadence variations characteristic of human voices. In conclusion, they stated that the video is likely manipulated via artificial intelligence.

To get expert analysis on the video featuring the general we reached out to our partners at RIT’s DeFake Project. Kelly Wu from the project shared the same source video featuring the general that we have linked to above. Ms. Wu noted that the doctored video of the general has a lower quality compared to the original, and attributed this to the facial features of the subject appearing more smoothed out.

During the analysis Wu slowed down the play speed of the video to half the normal speed and used the forward function to observe the video frames in detail. She noted that only the mouth region of the subject seemed to be re-generated.

She shared an image (see above) to highlight a visual oddity in the doctored video though she noted that it was difficult to find clear artefacts in the video and it was a well-made video overall.

Saniat Sohrawardi from the project pointed to a “frame-skip” at a specific time code in the video. Mr. Sohrawardi also highlighted another time code where the subject’s mouth wasn’t generated well and the teeth appear curved.

On the basis of our observations and expert analyses, we can conclude that in both the videos original footage was used with synthetic audio to peddle a false narrative about India’s support for Israel against Iran in the ongoing conflict in West Asia.

(Written by Debopriya Bhattacharya and Debraj Sarkar, edited by Pamposh Raina.)

(Kindly Note: The manipulated audio/video files that we receive on our tipline are not embedded in our assessment reports because we do not intend to contribute to their virality.)

You can read the fact-checks related to this piece published by our partners:

Fact Check: Video Of Rajnath Singh Supporting Israel-America Against Iran Is A Deepfake

Viral Video Showing Rajnath Singh Supporting Israel’s Attack On Iran Is Doctored

Did Army Chief Admit That India Shared Iranian Ship’s Location With Israel? No!

Did Indian Army Chief Admit To Revealing Iranian Warship’s Exact Location to Israel?

Video Of COAS Admitting That India Leaked IRIS Dena's Location Is A Deepfake