As the conflict in West Asia unfolded, a new geopolitical narrative began to take shape. It was not confined to the region but travelled across territorial boundaries and made its way into India through videos, images, and voices that were never quite what they seemed to be.

The common thread running through this wave of concocted content, viral on social media and closed messaging apps, was a suggestion that India was picking a side in the conflict, when it was not.

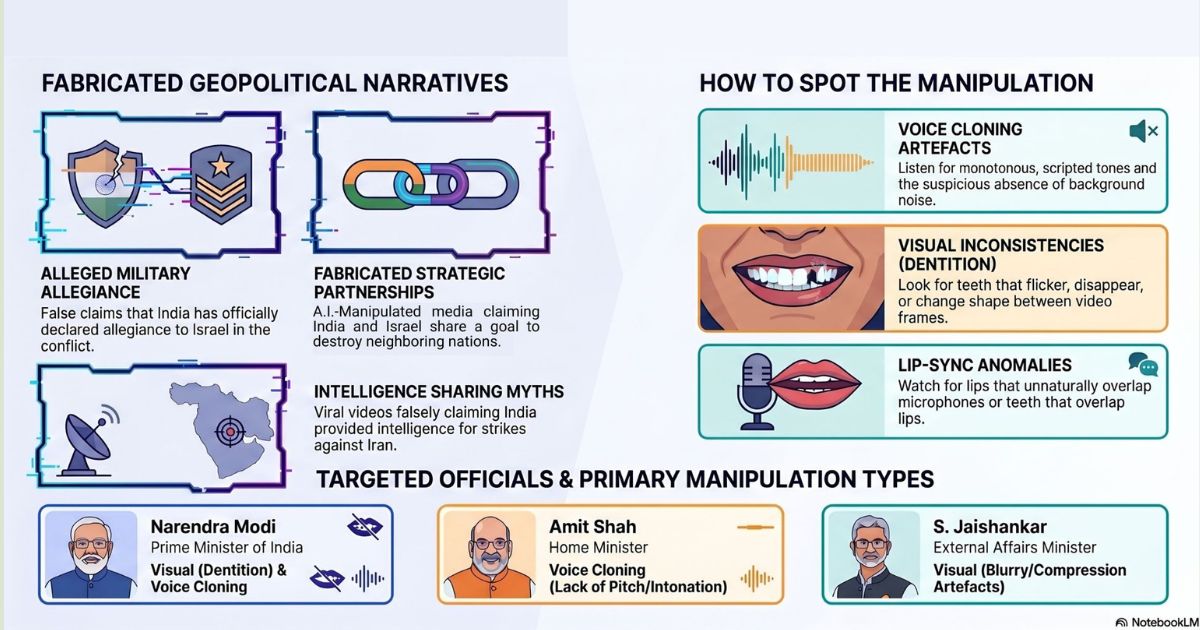

High-ranking Indian officials from the government and the defence establishment, as well as senior ministers, apparently featured in the videos, which were widely used to peddle false claims. Original footage of the individuals targeted was reworked with synthetic audio to fabricate the content. A few images, generated or manipulated using A.I., were also circulating.

It is disconcerting how a parallel world of diplomacy is being unleashed through A.I. The ease and speed with which statements never made by Indian representatives are being attributed to them is alarming.

The dominant narrative strands that have emerged, so far, in the video content we analysed are as follows:

- India apparently declared allegiance to Israel in the West Asia conflict.

- Israel and India supposedly share a common goal of becoming bigger nation states, and to achieve that, Pakistan and Iran need to be destroyed.

- India purportedly provided intelligence to Israel for the strikes against Iran.

- Israel supposedly granted billions of dollars in aid to the Afghan Taliban at India’s request, with India apparently intending to target Pakistan.

Similar audio and visual manipulation techniques were used in most of the fabricated videos we verified. The synthetic or A.I.-generated audio tracks were typically created using voice cloning techniques.

When we compared the speech attributed to the targeted individuals with their recorded speeches and interviews available online, the voices sounded identical, but the tone and cadence were noticeably different.

The visuals came with their own telltale signs. In several videos, the teeth of the subjects appeared to change shape between frames.

The lip-sync of the subjects in most of these videos was consistent with the accompanying audio tracks. Despite that, our expert partners were able to point out anomalies around the mouth region that were not obvious at first glance.

Spotting these visual artefacts, however, is only getting harder. Kelly Wu from RIT’s DeFake Project attributed this challenge to the poor quality of the source video used in some cases as well as the sophistication of the fabrications in other cases.

On Dr. Jaishankar’s manipulated video, Ms. Wu observed that “the video was fairly blurry, with the main character’s mouth movement and facial features hard to see clearly, so it may not be very reliable to claim whether the weirdness in the mouth or face region is due to manipulation or high compression.”

On the general’s manipulated video, Ms. Wu noted that it was difficult to find clear artefacts, and that it was a well-made video overall.

The growing sophistication of A.I. is only making such narrative building easier, which yet again underscores the importance of A.I. literacy, especially now.

Kindly note: The manipulated audio and video files escalated to us for analysis are not embedded here because we do not intend to contribute to their virality.

If you are an IFCN signatory and would like us to verify harmful or misleading audio and video content that you suspect is A.I.-generated or manipulated using A.I., send it our way for analysis using this form.